Remote Sensing

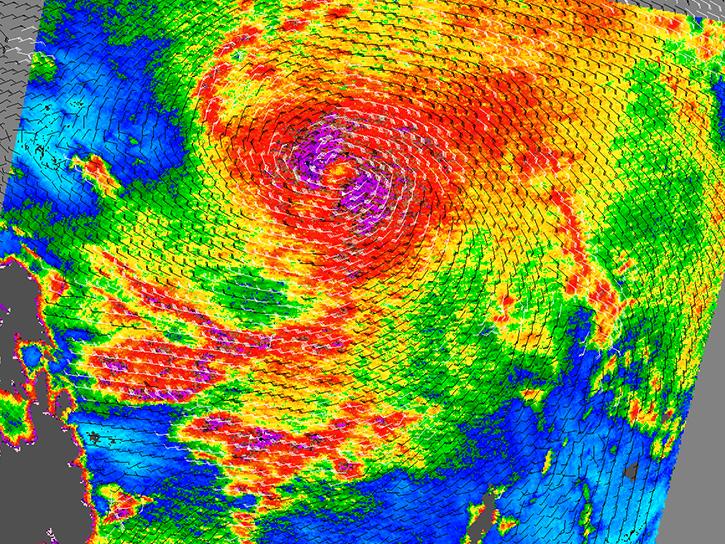

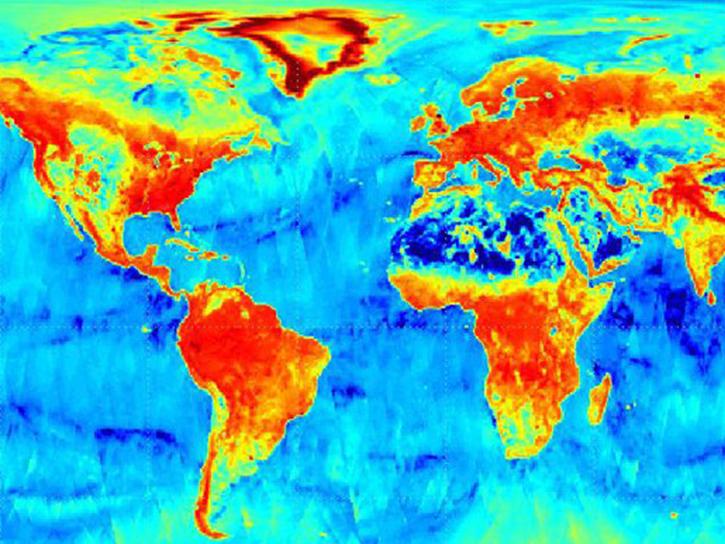

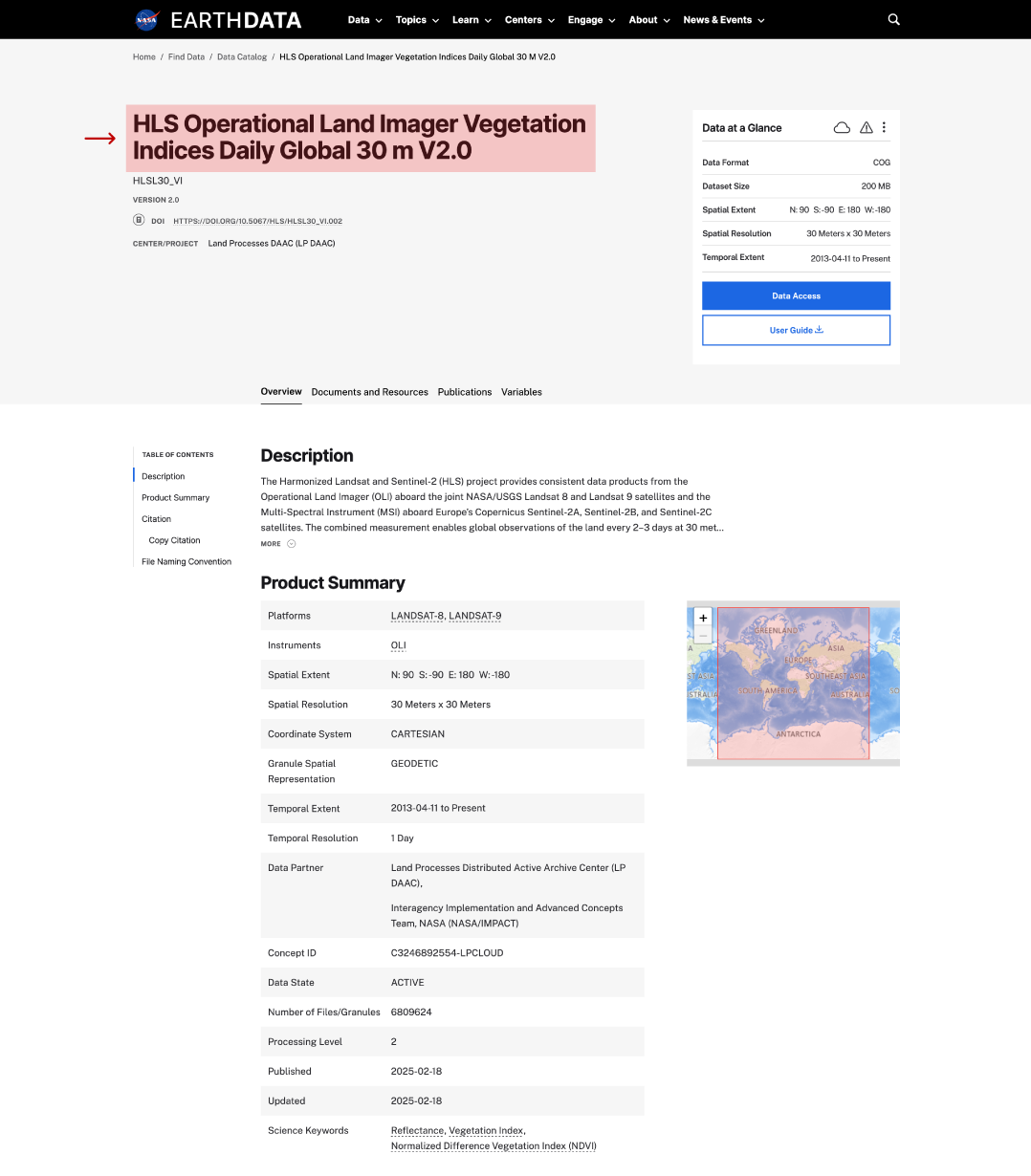

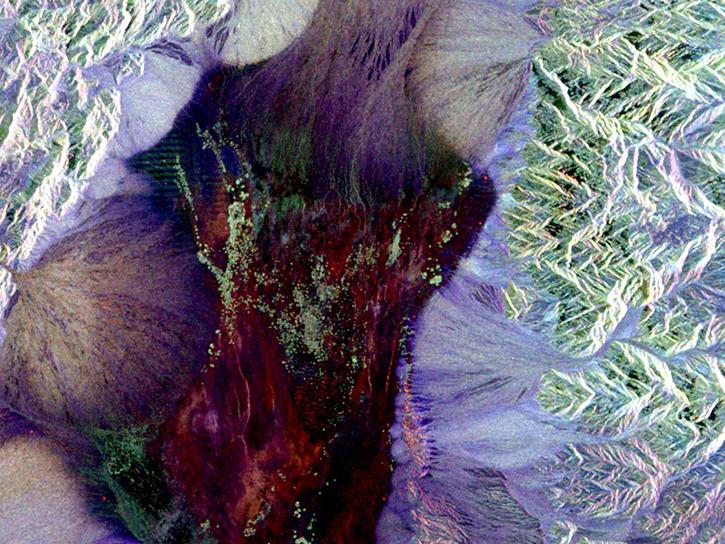

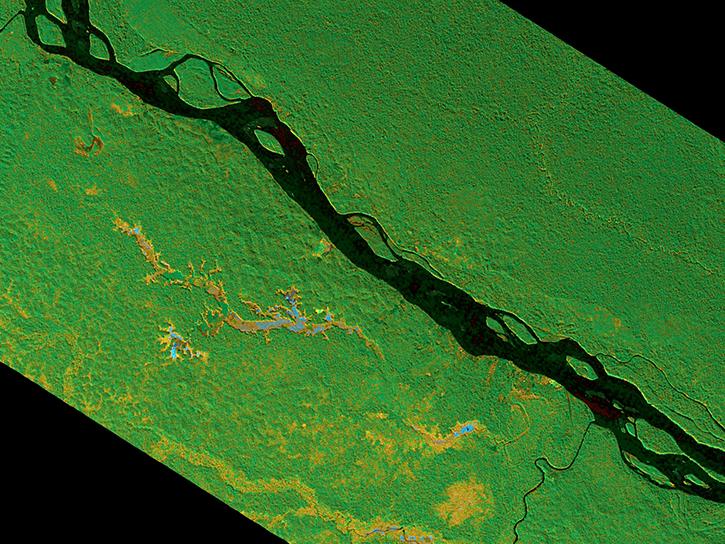

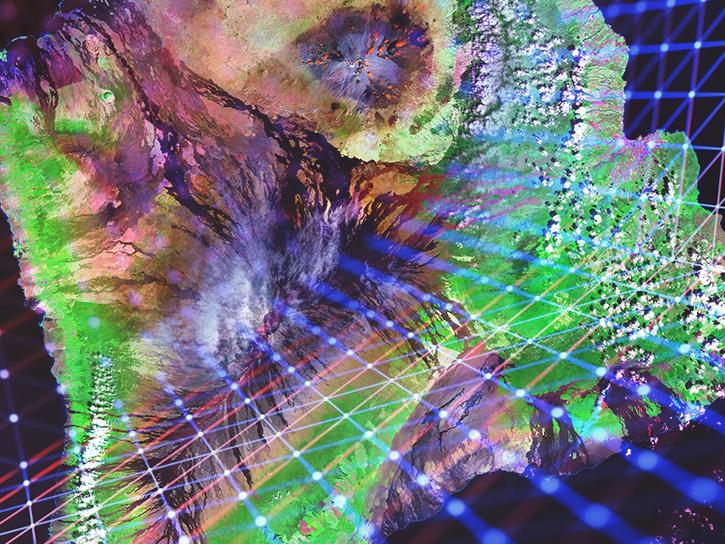

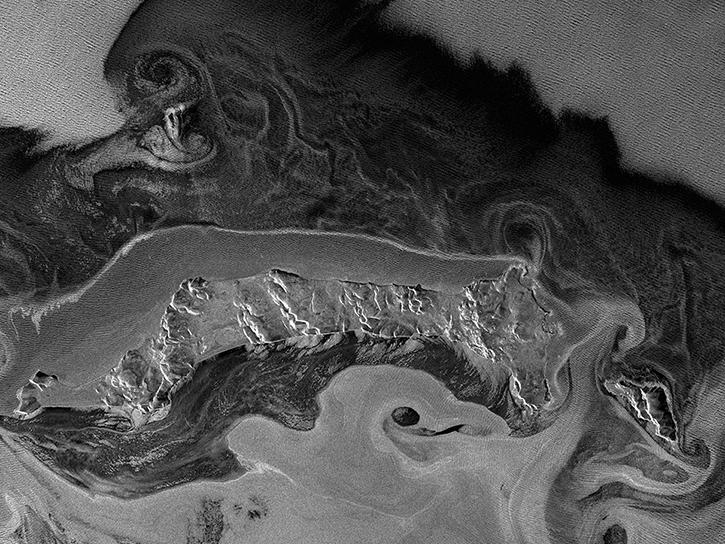

Remote sensing is the acquisition of information from a distance. NASA observes Earth and other planetary bodies with remote instruments on space-based platforms (e.g., satellites or spacecraft) and on aircraft; these instruments detect and record reflected or emitted energy. By providing a global perspective and a wealth of data about Earth systems, they enable data-informed decision making based on the current and future state of our planet.

For more information, check out the Fundamentals of Remote Sensing training from the Applied Remote Sensing Training (ARSET) program.